🧰 OpenAI’s superapp shift with Codex update

OpenAI has updated its Codex platform, shifting it from a coding tool to a cohesive app with background computer use and parallel agents.

- —Background computer use: Codex can operate any Mac app on its own, including apps without APIs, and several agents can work at the same time.

- —Memory & Automation: New memory features retain preferences across sessions, while automations let Codex resume long-running tasks days later.

- —In-app browser & UI: An Atlas-powered browser lets users mark up pages to give Codex direction, while inline image generation creates mockups within the app.

- —Rapid growth: Codex has reached 3 million weekly users with 70% month-over-month growth as it aims to become an AI 'superapp'.

Why it matters: This is OpenAI's direct answer to Anthropic's Claude Code, marking a strategic shift toward an AI-first operating environment.

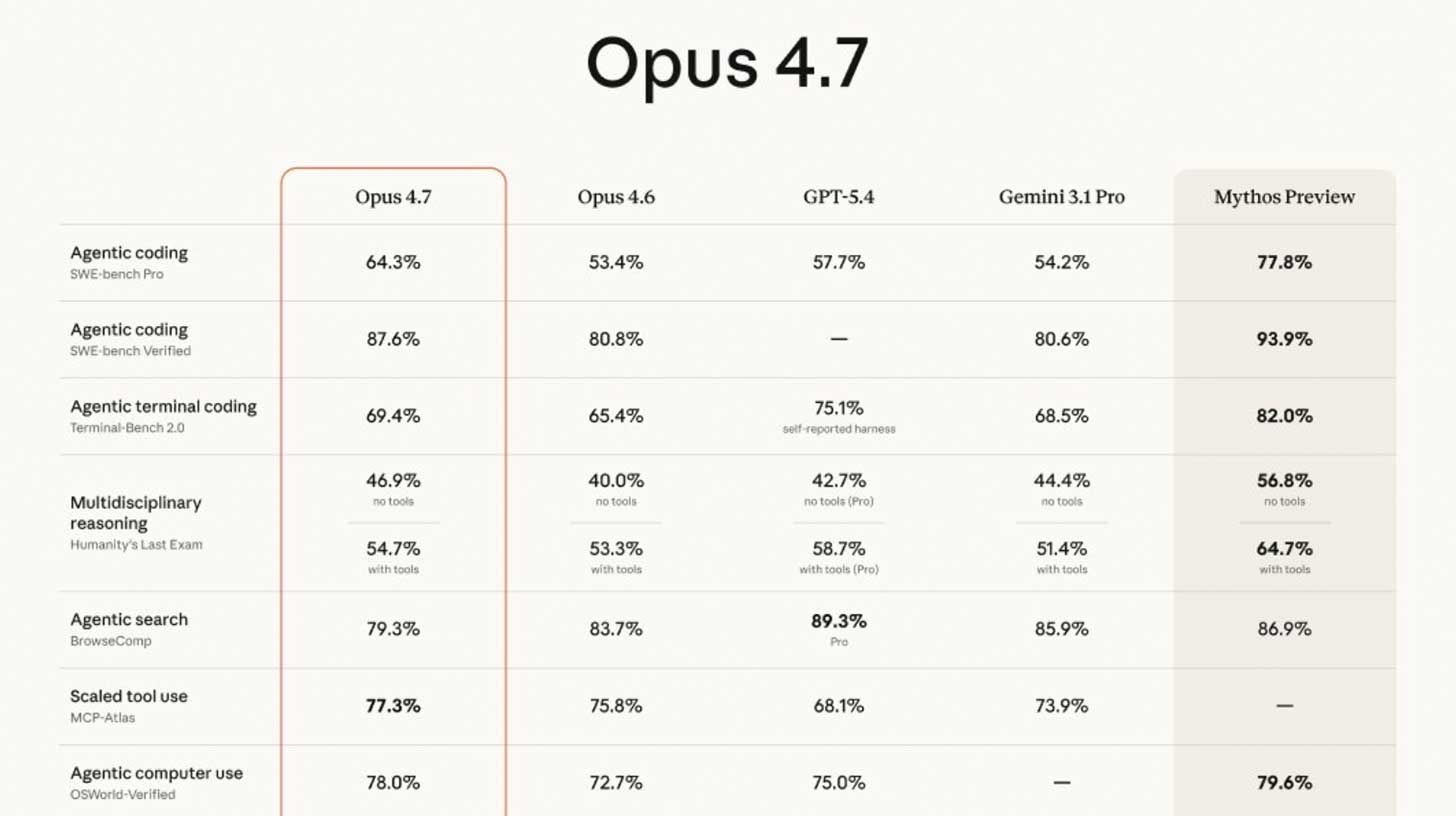

⚙️ Anthropic's Opus 4.7 tops rivals, trails Mythos

Anthropic has released Claude Opus 4.7, its new top-tier model that outperforms GPT-5.4 on agentic coding benchmarks.

- —Benchmark leap: Opus 4.7 scored 64.3% on SWE-bench Pro, up from Opus 4.6's 53.4% (a gain of 10.9 percentage points), setting a new public standard.

- —Pricing & Usage: While API pricing remains the same, the model consumes tokens significantly faster than its predecessor.

- —Advanced tools: New features include an '/ultrareview' command for bug detection and an 'xhigh' effort mode for developers.

- —Strategic split: Anthropic maintains a fast public release cycle while keeping its most powerful 'Mythos' model for exclusive partners.

Why it matters: Anthropic is accelerating its release cadence to challenge OpenAI's dominance, effectively running a two-track strategy for public vs. frontier models.

🦙 Run an LLM on your laptop for free with Ollama

Learn how to run powerful AI models locally on your machine, with no subscriptions, no accounts, and no data leaving your computer.

- —Easy setup: Download the installer from ollama.com for Mac, Linux, or Windows and launch the application.

- —Model selection: Choose lightweight models like gemma3 that can run smoothly on laptops with as little as 8GB of RAM.

- —Privacy first: Once downloaded, models run entirely on your machine and work offline, so your prompts stay private and each interaction costs nothing.

- —Pro versatility: Use the Ollama API to integrate models with web tools or provide a free backend for coding agents.

Why it matters: Local deployment democratizes access to AI, providing a private, cost-free alternative to cloud-based services.
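The API integration mentioned above can be sketched in a few lines of Python. This is a minimal illustration, not an official client: it assumes Ollama is running on its default local address (`http://localhost:11434`), that the model has already been downloaded (for example with `ollama pull gemma3`), and that the non-streaming `/api/generate` endpoint is used. The helper names `build_generate_request` and `ask_local_model` are hypothetical and exist only for this example.

```python
import json
import urllib.request

# Assumption: the Ollama server is listening on its default local port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "gemma3") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "gemma3", timeout: float = 120.0) -> str:
    """Send the prompt to the local model and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        body = json.loads(reply.read().decode("utf-8"))
    # With "stream": False the whole completion arrives in the "response" field.
    return body["response"]


# Example call (requires a running Ollama server with the model pulled):
# print(ask_local_model("Explain in one sentence what a local LLM is."))
```

Because the request only ever targets `localhost`, the prompt and the reply stay on the machine. Any tool that can send HTTP requests can use the same endpoint as a backend.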

🧬 OpenAI’s first science domain-specific model

OpenAI has launched GPT-Rosalind, a new model series for drug discovery and biological research, following its recent cybersecurity release.

- —Scientific reasoning: Rosalind can read papers, query lab databases, and generate biological hypotheses autonomously.

- —Superior performance: The model shows massive gains in biochemistry and experiment design benchmarks compared to GPT-5.4.

- —Expert-level output: In RNA prediction tests, Rosalind's performance exceeded that of 95% of human scientists.

- —Enterprise rollout: Early access is being granted to partners like Amgen and Moderna to accelerate actual drug discovery workflows.

Why it matters: OpenAI is moving beyond 'one-size-fits-all' models, building purpose-built 'specialists' to solve industry-specific bottlenecks.