⚖️ Supreme Court ducks AI copyright question

The U.S. Supreme Court declined to hear the most significant case so far on whether AI-generated works can be copyrighted. The move leaves in place lower-court rulings that only humans can be authors, punting on one of the defining IP questions of the generative AI era.

- The case centers on Stephen Thaler, an engineer who built an AI system called DABUS and applied in 2018 for a copyright that credited the system as the author

- The Copyright Office rejected the application. In 2023, a federal judge ruled that human authorship is a "bedrock requirement," and the DC Circuit upheld that ruling

- The DOJ backed the Copyright Office, arguing in court filings that copyright law was designed to foster human creation, not machine output

- The appeals court did note that Thaler could have claimed authorship himself instead of crediting the AI, hinting that the door isn't closed on AI-assisted work made under human direction

Why it matters: An AI producing art years before the mainstream gen-art boom is wild. But putting off the question is awkward now that AI-generated material has flooded everyday creative work. Well-funded studios all but guarantee that bigger legal fights over where this line sits are coming.

🧠 Anthropic wants your ChatGPT memories

Anthropic launched a memory import tool that lets users bring the preferences and context they have built up in rival chatbots into Claude with a simple copy-paste. The launch lands during a wave of users switching to Claude amid OpenAI's Pentagon controversy.

- Users run a provided prompt in their old chatbot, then paste the output into their Claude account, with the import taking effect within 24 hours

- The import carries over personal instructions, project details, and behavioral preferences from Gemini, ChatGPT, and Copilot in a single step

- Anthropic also opened its long-term memory feature to free-tier users, giving every account recall across ongoing conversations

- Claude Code gained an auto-memory upgrade that quietly saves details about coding environments, debugging patterns, and workflow preferences across sessions

Why it matters: Memory import tools are a customer acquisition play. Offering an easy exit ramp while public goodwill is running high after Anthropic's high-profile Pentagon refusal turns viral sympathy into lasting retention, since imported memory raises the cost of switching away again.

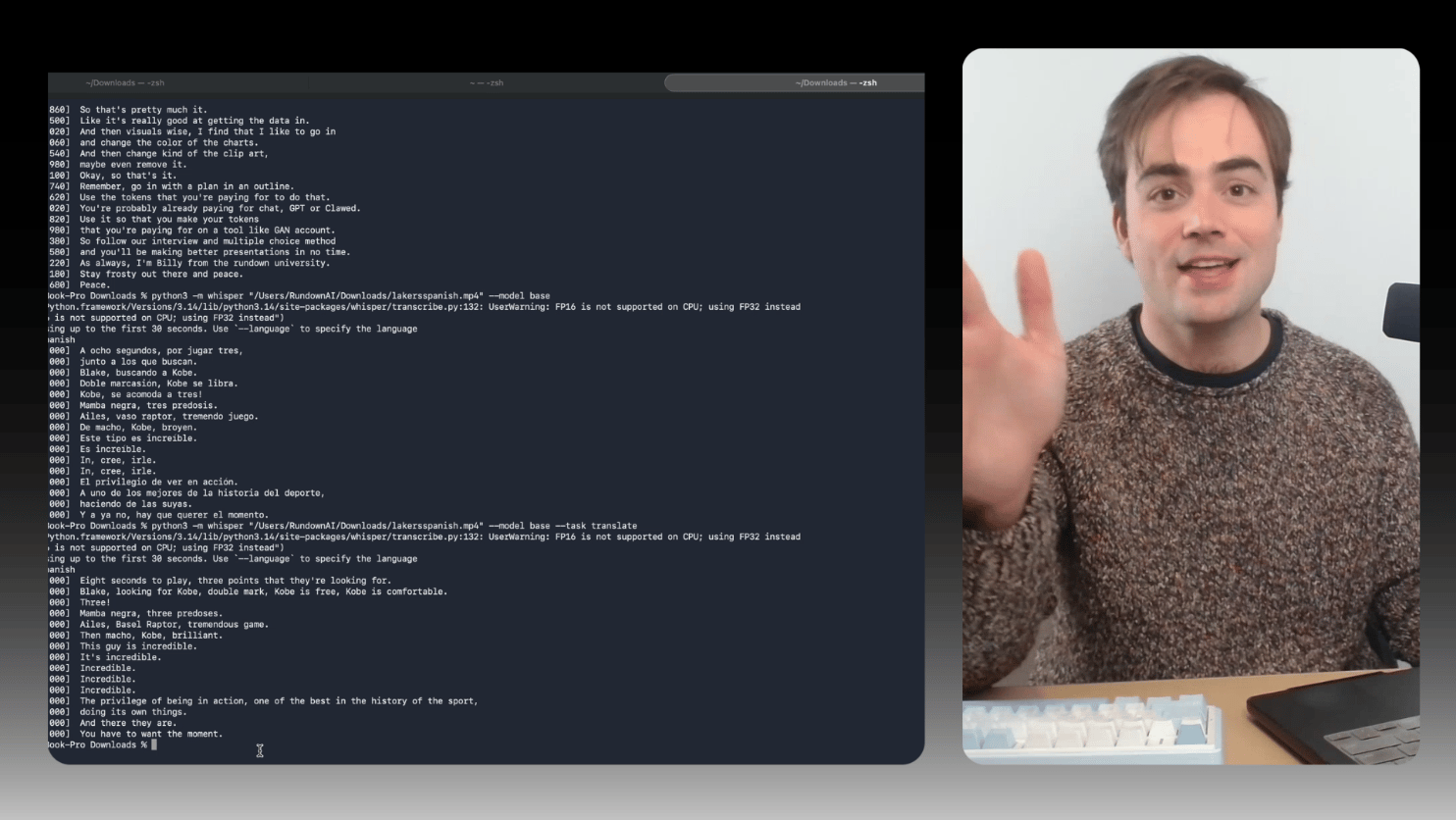

📝 Transcribe any video for free with this local AI

In this tutorial, you'll learn how to transcribe and translate almost any video for free, fully offline, using an AI model that runs on your own machine, with no need for shady or intrusive third-party transcription services.

- Open your terminal and run `brew install ffmpeg`, then install Whisper with `pip3 install -U openai-whisper`

- FFmpeg handles video and audio processing from the command line, while the open-source openai-whisper model does the speech-to-text recognition

- Run `python3 -m whisper your-video.mp4 --model base` to process the file entirely on your own hardware, at no cost

- A 15-minute video takes about two minutes to process and produces a `.txt` transcript along with a timestamped `.srt` subtitle file

Why it matters: Power users can add Whisper's translation or language flags to the same terminal command to get private, local, multi-language transcription that works without a network connection.
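For readers who want to script this, here is a minimal Python sketch that assembles the same `python3 -m whisper` command with optional translation and language flags. It assumes the openai-whisper command-line options `--task`, `--language`, and `--output_dir`; the helper names `build_whisper_command` and `run_whisper` are hypothetical, made up for illustration. As far as we know, `--task translate` produces English output only.

```python
import shlex
import subprocess
from typing import List, Optional


def build_whisper_command(
    video_path: str,
    model: str = "base",
    task: str = "transcribe",
    language: Optional[str] = None,
    output_dir: str = ".",
) -> List[str]:
    """Build the argument list for a local Whisper run (hypothetical helper)."""
    if task not in ("transcribe", "translate"):
        raise ValueError("task must be 'transcribe' or 'translate'")
    cmd = [
        "python3", "-m", "whisper", video_path,
        "--model", model,
        "--task", task,
        "--output_dir", output_dir,
    ]
    # Optional hint for the spoken language, e.g. "es" for Spanish.
    if language is not None:
        cmd += ["--language", language]
    return cmd


def run_whisper(cmd: List[str]) -> None:
    """Run the command; requires FFmpeg and openai-whisper to be installed."""
    subprocess.run(cmd, check=True)


# Print the command for a Spanish video translated to English, without running it.
print(shlex.join(build_whisper_command("your-video.mp4", task="translate", language="es")))
```

Once FFmpeg and Whisper are installed, passing the list to `run_whisper` runs the job. Building the command as a list rather than a single string avoids shell-quoting problems with file names that contain spaces.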

🧠 Alibaba's tiny AI tops models 13x its size

Alibaba released the Qwen3.5 Small series, a four-model family of compact open-source models built to run on laptops and phones. The largest of them beat an OpenAI model roughly 13x its size on reasoning benchmarks.

- The lineup ranges from a 0.8B-parameter model for mobile devices up to a 9B model aimed at offline laptops, all released under a permissive open-source license that allows commercial use

- On benchmarks, the 9B model outperformed OpenAI's far larger GPT-OSS-120B on graduate-level reasoning and multilingual tests

- All four models handle text, images, and video natively. The mid-tier 4B model matched visual understanding benchmarks that previously required models more than 20x larger

- Tesla CEO Elon Musk promoted the release, saying the models have "impressive intelligence density"

Why it matters: Models this small lack frontier-level reasoning. But for basic app integrations, offline data analysis, and cutting cloud costs, they put capable AI on ordinary laptops and phones.
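The size claims above reduce to simple arithmetic. The sketch below computes the parameter ratio between the two models and a rough memory estimate for the weights alone at a given number of bits per parameter. The function names and the 16-bit and 4-bit settings are illustrative assumptions, not figures from Alibaba, and the parameter counts are the nominal ones in the model names. Real memory use is higher once activations and context are included.

```python
def size_ratio(large_params_b: float, small_params_b: float) -> float:
    """How many times larger one model is, by nominal parameter count (billions)."""
    return large_params_b / small_params_b


def weight_memory_gb(params_b: float, bits_per_param: int) -> float:
    """Approximate storage for the weights only, in GB (1 GB = 1e9 bytes)."""
    # params_b * 1e9 parameters * (bits / 8) bytes each, divided by 1e9 bytes per GB
    return params_b * bits_per_param / 8


print(f"{size_ratio(120, 9):.1f}x")  # 13.3x

# Assumed precisions: 16-bit weights and 4-bit quantized weights.
for params_b in (0.8, 4, 9):
    for bits in (16, 4):
        gb = weight_memory_gb(params_b, bits)
        print(f"{params_b}B at {bits}-bit: about {gb:.1f} GB of weights")
```

Under these assumptions, a 9B model needs about 18 GB for its weights at 16 bits and about 4.5 GB at 4 bits, which is why the smaller tiers are plausible fits for laptops and phones.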